How To Draw a Line With Robot Movement In V-rep

Simple Foraging and Random Aggregation Strategy for swarm robotics without communication

In swarm robotics, foraging and aggregation are basic tasks, yet they can be challenging when there is no communication between the robots. This paper proposes a strategy using a Mealy Deterministic Finite State Machine (DFSM) that switches between five states with two different algorithms: the Rebound avoider/follower, which uses the proximity sensors, and the Search blob/ePuck, which uses 2D image processing of the ePuck embedded camera. Ten trials for each scenario are simulated on V-rep in order to analyse the performance of the strategy in terms of the mean and standard deviation.

Pre-requisites

- Basic robotics theory.

- Finite State Machine theory.

- V-rep proEdu v3.6

- Knowledge of the Lua programming language.

Source code

PDF/HTML version. V-rep and LaTeX source code on GitHub.

Problem formulation

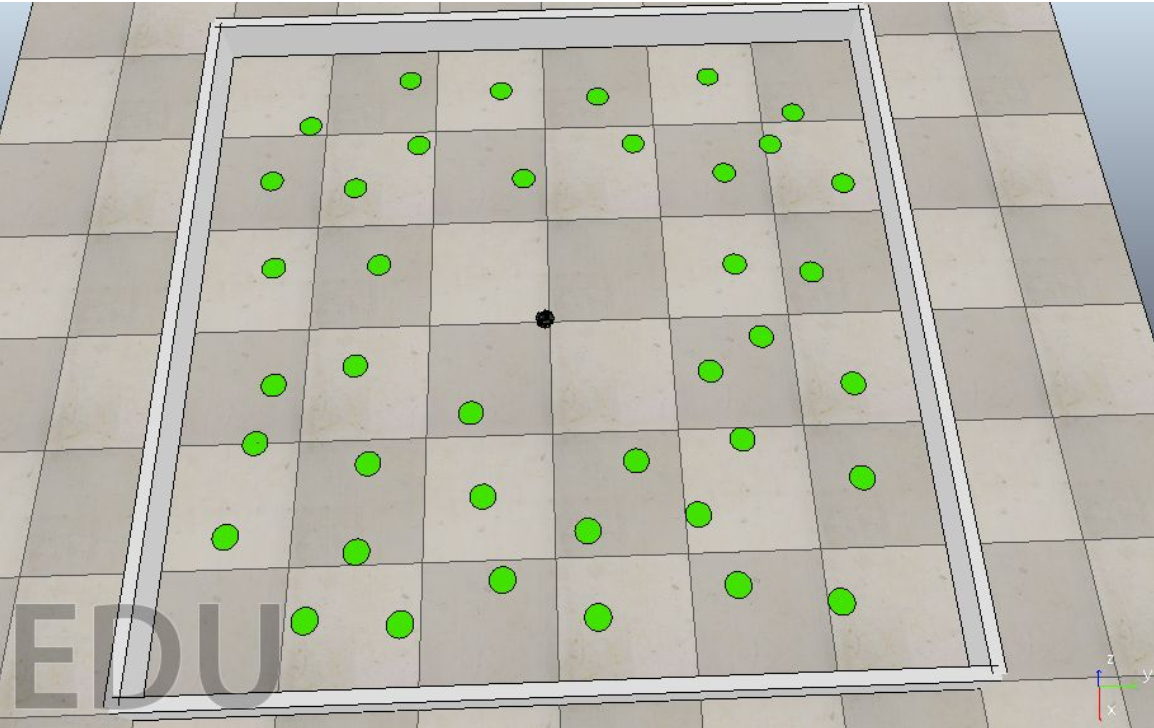

The task is to design a control strategy for e-puck robots that do the following:

- Explore the given environment to collect resources (foraging);

- While foraging, avoid collisions between robots and with the environment boundary.

For an object to be collected, a robot's centre must be within 5 cm of the object's centre. There won't be any collisions between the robot and the object. For the evaluation of this task, two foraging scenarios will be considered:

- With a single robot;

- With a group of five robots (all with an identical controller).

The controller used for both scenarios MUST be the same.

To assess the foraging performance of the strategy, ten trials per scenario are expected. Each trial should last 60 seconds of simulation time. Show the number of objects collected in total over time (average and standard deviation over 10 trials). Include one plot for each scenario.
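The per-scenario statistics could be computed along the following lines. This is a minimal sketch: the function name `meanAndStd` and the trial counts are hypothetical placeholders, and the sample standard deviation (dividing by n - 1) is an assumption, since the text does not say which form is used.

```lua
-- Mean and sample standard deviation of a list of numbers.
-- Assumes at least two values (the sample form divides by n - 1).
function meanAndStd(values)
    local n = #values
    local sum = 0
    for _, v in ipairs(values) do
        sum = sum + v
    end
    local mean = sum / n

    local sqDiff = 0
    for _, v in ipairs(values) do
        sqDiff = sqDiff + (v - mean) ^ 2
    end
    return mean, math.sqrt(sqDiff / (n - 1))
end

-- Hypothetical number of objects collected in each of 10 trials
-- (placeholder values, not results from the article).
local collected = {4, 6, 5, 7, 5, 6, 4, 5, 6, 5}
local mean, std = meanAndStd(collected)
print(string.format("mean = %.2f, std = %.2f", mean, std))
```

To plot the total over time, the same function would be applied to the counts logged at each sampled time step across the ten trials.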

Important:

- Do not use wheel speeds the e-puck cannot achieve. That is, when using the function sim.setJointTargetVelocity(.,.), make sure the velocity argument is bounded by [-6.24, 6.24].

- You should use the sensors available on the e-puck platform (e.g. camera, proximity). You may implement additional sensors; however, these must not provide any global information (e.g. absolute position or orientation).
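One way to respect the wheel-speed bound is to saturate every requested speed before it reaches the motor. The sketch below is illustrative only: `clampWheelSpeed`, `leftMotor` and `vLeft` are hypothetical names, and only the limit 6.24 and the call `sim.setJointTargetVelocity` come from the task description.

```lua
-- Wheel speed limit from the task description.
MAX_WHEEL_SPEED = 6.24

-- Hypothetical helper: saturate a requested wheel speed to
-- the interval [-MAX_WHEEL_SPEED, MAX_WHEEL_SPEED].
function clampWheelSpeed(v)
    return math.max(-MAX_WHEEL_SPEED, math.min(MAX_WHEEL_SPEED, v))
end

print(clampWheelSpeed(8.0))    -- above the limit, gets saturated
print(clampWheelSpeed(-10.0))  -- below the limit, gets saturated
print(clampWheelSpeed(3.5))    -- inside the limit, unchanged

-- Inside a V-rep script the clamped value would then be sent to the joint:
-- sim.setJointTargetVelocity(leftMotor, clampWheelSpeed(vLeft))
-- (leftMotor is a placeholder joint handle, vLeft a placeholder speed).
```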

Foraging and Random Aggregation

The DFSM diagram in Fig. 1, which is defined by (1) and (2), starts in the Behaviour state, where the robot is set as $\textit{avoider}$ while the simulation time is $t\leq 60[s]$. During that time, the Foraging state looks for the green blobs with the Search blob/ePuck algorithm while avoiding obstacles using the Rebound algorithm. Moreover, a Random Movement state is used to introduce randomness to the system so the agent can take different paths if there is no blob or obstacle detection. For $60<t\leq 120$, the Behaviour of the robot is set to $\textit{follower}$ and it switches to the Random Aggregation state, where it uses both algorithms: the Rebound to follow ePucks with the proximity sensors and the Search to look for the closest ePuck wheels. For both algorithms, the output is the angle of attack $\alpha_n$, where $n$ depends on the current state.

\begin{align*} S&=\lbrace B,F,R,A,Ra \rbrace \tag{1}\\ \varSigma&= \lbrace t\leq 60,60<t\leq 120,bl~\exists,bl~\nexists,ob~\exists,ob~\nexists,eP~\exists, eP~\nexists \rbrace \tag{2}\\ s_0&=\lbrace B \rbrace \\ \end{align*}

where $S$ is the finite set of states, $\varSigma$ is the input alphabet, $\delta:S\times\varSigma$ is the state transition function (Table 1), $s_0$ is the initial state, and $\exists$ and $\nexists$ mean detection and no detection respectively.
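The switching described above can be sketched as a small selection function. This is a simplified reading of the prose, not a transcription of Table 1: the function name `selectState`, the state labels, and the exact conditions are assumptions made for illustration.

```lua
-- Simplified state selection based on the inputs in the alphabet (2).
-- t: simulation time in seconds.
-- blobSeen, obstacleSeen, epuckSeen: boolean detection flags.
-- Returns the behaviour ("avoider" or "follower") and an assumed state label.
function selectState(t, blobSeen, obstacleSeen, epuckSeen)
    if t <= 60 then
        -- Foraging phase: avoid obstacles and look for blobs.
        if blobSeen or obstacleSeen then
            return "avoider", "Foraging"
        end
        -- Nothing detected: fall back to random movement.
        return "avoider", "Random"
    elseif t <= 120 then
        -- Aggregation phase: follow other ePucks.
        if epuckSeen then
            return "follower", "Aggregation"
        end
        return "follower", "Random"
    end
    -- Outside the time window covered by the description.
    return nil, nil
end

print(selectState(10, true, false, false))
print(selectState(90, false, false, false))
```

Keeping the selection in one pure function makes it easy to check each input combination without running the simulator.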

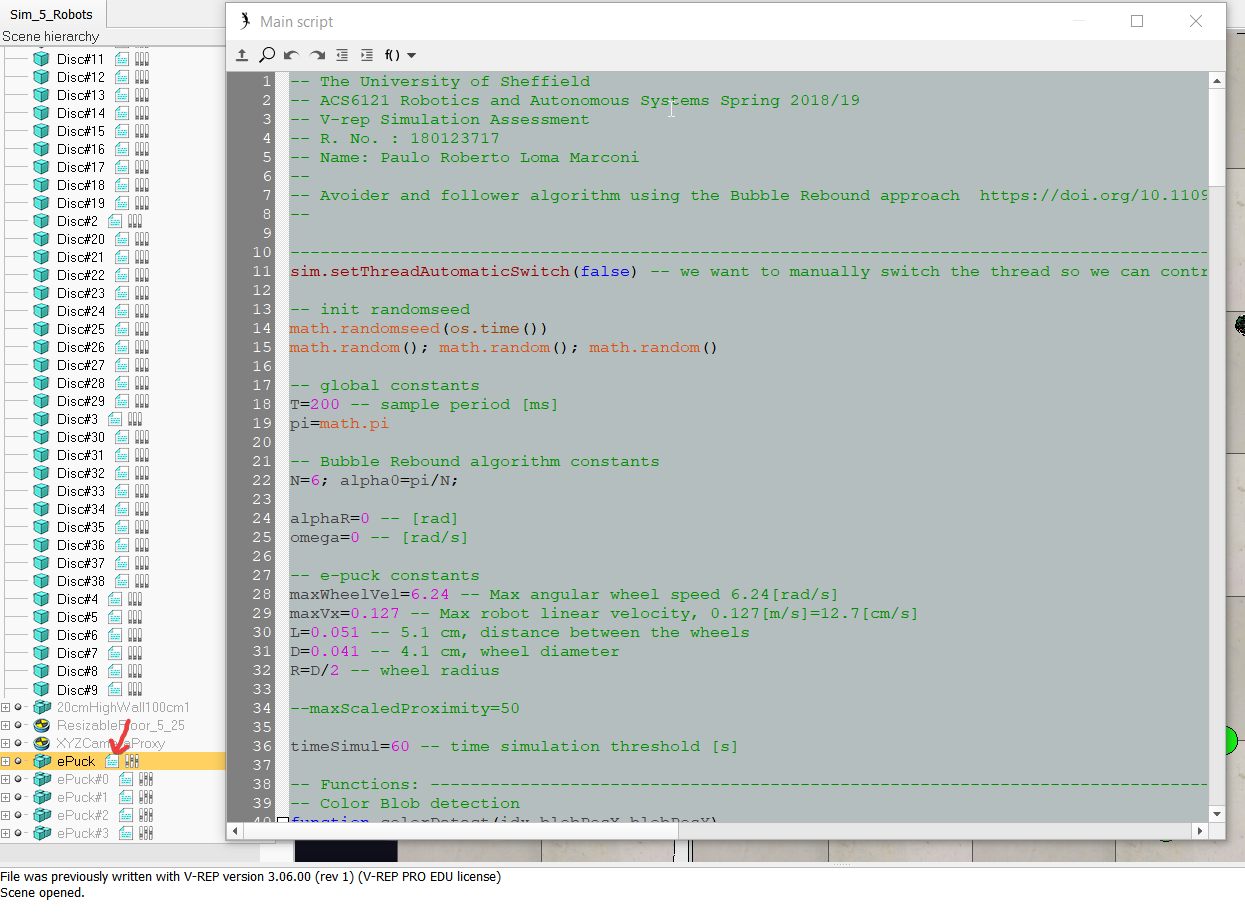

Unicycle model

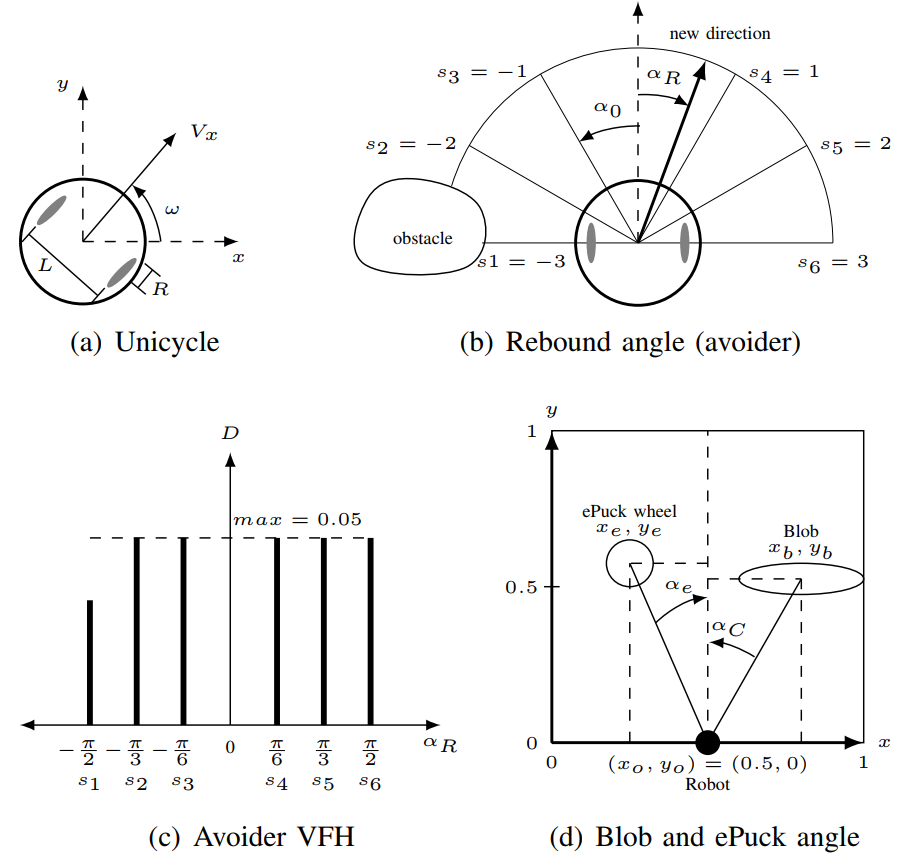

The Unicycle model in Fig. 2a [1] controls the angular velocities of the right and left wheels, $v_r$ and $v_l$, as follows,

\begin{align} v_r&= \dfrac{2~V_x+\omega~L}{2~R} \tag{3}\\ v_l&=\dfrac{2~V_x-\omega~L}{2~R} \tag{4} \end{align}

where $V_x$ is the linear velocity of the robot, $L$ is the distance between the wheels, $R$ is the radius of each wheel, and $\omega$ is the angular velocity of the robot. Using $\alpha_n$ and the simulation sampling period $T$, the control variable for the simulation is $\omega=\alpha_n/T$, refer to code line 24, 197, and 215.
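Equations (3) and (4), together with $\omega=\alpha_n/T$, translate directly into code. The sketch below is an illustration rather than the article's controller: the function names are hypothetical, and the geometry and example values (wheel radius, wheel separation, speed, sampling period) are assumed figures chosen only to exercise the formulas.

```lua
-- Assumed e-puck geometry in metres (approximate, not from the article).
WHEEL_RADIUS = 0.0205      -- R
WHEEL_SEPARATION = 0.053   -- L

-- Equations (3) and (4): wheel angular velocities from the
-- robot linear velocity Vx and angular velocity omega.
function unicycleToWheels(Vx, omega, L, R)
    local vr = (2 * Vx + omega * L) / (2 * R)
    local vl = (2 * Vx - omega * L) / (2 * R)
    return vr, vl
end

-- Control variable: omega = alpha_n / T.
function angleToOmega(alpha, T)
    return alpha / T
end

-- Example with assumed values: 0.1 rad angle of attack,
-- 0.05 s sampling period, 0.05 m/s forward speed.
local omega = angleToOmega(0.1, 0.05)
local vr, vl = unicycleToWheels(0.05, omega, WHEEL_SEPARATION, WHEEL_RADIUS)
print(vr, vl)
```

With $\omega=0$ both wheels turn at $V_x/R$, and a positive $\omega$ speeds up the right wheel while slowing the left one, as the signs in (3) and (4) indicate.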

Rebound avoider/follower algorithm

The Rebound algorithm [2] calculates the Rebound angle $\alpha_R$ to avoid/follow an obstacle/objective given $\alpha_0=\pi/N$ and $\alpha_i=i~\alpha_0$,

\begin{align}\tag{5} \alpha_R&=\dfrac{\sum_{i=-N/2}^{N/2}~\alpha_i~D_i}{\sum_{i=-N/2}^{N/2}~D_i} \end{align}

where $\alpha_0$ is the uniformly distributed angular step, $N$ is the number of sensors, $\alpha_i$ is the angular information per step $\alpha_i~\epsilon\left[-\frac{N}{2},\frac{N}{2}\right]$, and $D_i$ is the distance value obtained by the proximity sensors, refer to code line 18 and 139.

The weight vector given by $\alpha_i$ sets the robot behaviour for each respective mapped sensor $\lbrace s_1,s_2,s_3,s_4,s_5,s_6\rbrace$. For the $\textit{avoider}$ it is $\lbrace -3,-2,-1,1,2,3 \rbrace$, and for the $\textit{follower}$ it is $\lbrace 3,2,1,-1,-2,-3 \rbrace$. Fig. 2b and Fig. 2c show an example of $\alpha_R$ with the Vector Field Histogram (VFH) for the $\textit{avoider}$ case. Refer to code line 128 and 132.
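Equation (5) with the two weight vectors can be sketched as follows. The function name `reboundAngle` and the zero-sum guard are assumptions added for illustration; the weights and $\alpha_0=\pi/N$ follow the text, with each weight playing the role of the index $i$ in $\alpha_i=i~\alpha_0$.

```lua
-- Weight vectors for sensors s1..s6, as given in the text.
AVOIDER_WEIGHTS = {-3, -2, -1, 1, 2, 3}
FOLLOWER_WEIGHTS = {3, 2, 1, -1, -2, -3}

-- Equation (5): weighted average of the angular steps,
-- weighted by the proximity readings D_i.
-- distances: list of N readings; weights: list of N indices i.
function reboundAngle(distances, weights)
    local n = #distances
    local alpha0 = math.pi / n
    local num, den = 0, 0
    for i = 1, n do
        num = num + weights[i] * alpha0 * distances[i]
        den = den + distances[i]
    end
    -- Assumed guard: with no readings at all, keep the current heading.
    if den == 0 then
        return 0
    end
    return num / den
end

-- Hypothetical readings for the six sensors.
local readings = {0.0, 0.0, 0.0, 0.2, 0.5, 0.8}
print(reboundAngle(readings, AVOIDER_WEIGHTS))
print(reboundAngle(readings, FOLLOWER_WEIGHTS))
```

Because the follower weights are the negated avoider weights, the two behaviours return angles of equal magnitude and opposite sign for the same readings.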

Search blob/ePuck algorithm

The ePuck embedded camera on V-rep is a vision sensor that filters the RGB colours of the blobs and other ePucks. Uncollected blobs are mapped as green and collected ones as red, and the ePuck wheels are also mapped because they have green and red parts, refer to code line 97. The information of interest that this sensor outputs is the size, the centroid's 2D position, and the orientation of the detected objects. Therefore, when objects are detected by the camera, a simple routine finds the biggest one, which is the closest relative to the ePuck, and using (6) and (7), the angle of attack $\alpha_C$ or $\alpha_e$ can be calculated for the blobs and ePucks respectively, refer to Fig. 2d and code line 150. The orientation value is used to differentiate between objects: for blobs it is $=0$ and for ePuck wheels it is $\neq 0$, refer to code line 105.

\begin{align} \alpha_C &= \arctan \dfrac{x_b-x_o}{y_b-y_o} \tag{6}\\ \alpha_e &= \arctan \dfrac{x_e-x_o}{y_e-y_o} \tag{7} \end{align}

where $(x_o,y_o)$, $(x_b,y_b)$, and $(x_e,y_e)$ are the relative positions of the robot, the blob, and another ePuck wheel in the 2D image. In the Random state, where either the robot is foraging but does not see any blobs or is aggregating but there is no other ePuck nearby, (6) and (7) are modified with a random value $w$ with a probability function $P$,

\begin{align} \alpha_{C_r} &= \alpha_C~w \tag{8}\\ \alpha_{e_r} &= \alpha_e~w \tag{9} \end{align}

where $P(\lbrace w~\epsilon~\Omega:X(w)=1/3 \rbrace)$ and $\Omega=\lbrace -1,0,1 \rbrace$, refer to code line 158 and 205.
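The search routine and equations (6) to (9) can be sketched as below. All function names and the object fields (`size`, `x`, `y`) are hypothetical, and returning `nil` when the denominator is zero is an assumed convention; the actual vision-sensor output format in V-rep is not reproduced here.

```lua
-- Pick the detected object with the largest size, taken as the closest one.
-- objects: list of tables with hypothetical fields size, x, y.
function biggestObject(objects)
    local best = nil
    for _, obj in ipairs(objects) do
        if best == nil or obj.size > best.size then
            best = obj
        end
    end
    return best
end

-- Equations (6) and (7): angle of attack towards a target at (xt, yt)
-- seen from the robot reference (xo, yo) in the 2D image.
function angleOfAttack(xt, yt, xo, yo)
    local dy = yt - yo
    if dy == 0 then
        return nil  -- assumed convention: ratio undefined, caller decides
    end
    return math.atan((xt - xo) / dy)
end

-- Random value w drawn from {-1, 0, 1}, each with probability 1/3.
function randomWeight()
    local choices = {-1, 0, 1}
    return choices[math.random(1, 3)]
end

-- Equations (8) and (9): randomised angle for the Random state.
function randomisedAngle(alpha)
    return alpha * randomWeight()
end

-- Hypothetical detections in normalised image coordinates.
local detections = {
    {size = 0.02, x = 0.30, y = 0.60},
    {size = 0.08, x = 0.70, y = 0.40},
}
local target = biggestObject(detections)
local alpha = angleOfAttack(target.x, target.y, 0.5, 0.0)
print(alpha, randomisedAngle(alpha))
```

Multiplying by $w$ either keeps the angle, mirrors it, or cancels it, which is what lets the robot take different paths when nothing useful is detected.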

Lua code of the algorithm

Edit the controller

To edit the controller for all the ePucks, open V-rep, load the scene sim_5_Robots.ttt, and edit the ePuck file.

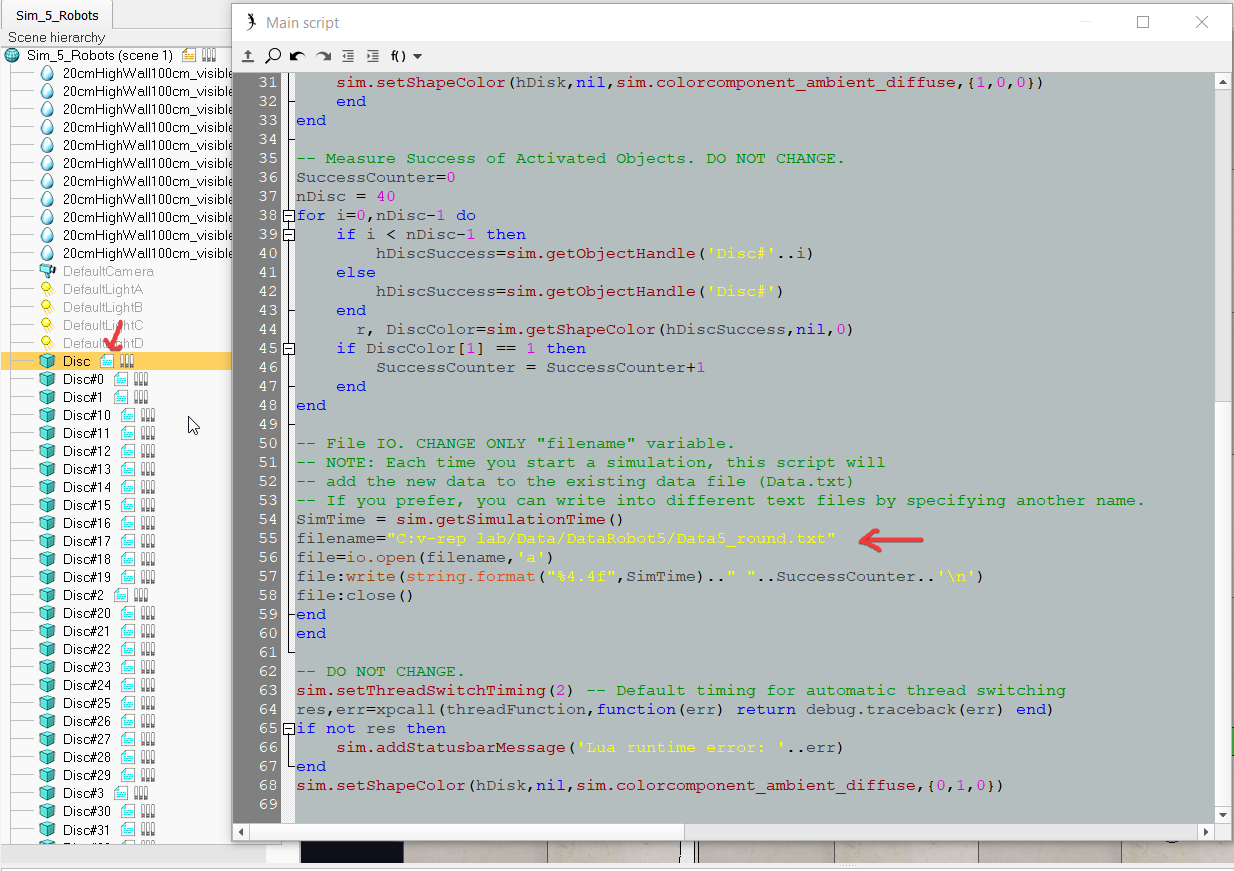

Save the output data

To edit the location of the output data, open the disc file.

References

[1] Jawhar Ghommam, Maarouf Saad, and Faical Mnif. "Formation path following control of unicycle-type mobile robots". In: 2008 IEEE International Conference on Robotics and Automation. IEEE, 2008. DOI: 10.1109/robot.2008.4543495.

[2] I. Susnea et al. "The bubble rebound obstacle avoidance algorithm for mobile robots". In: IEEE ICCA 2010. IEEE, 2010. DOI: 10.1109/icca.2010.5524302.

Source: https://paulomarconi.github.io/blog/Foraging_Aggregation_V-rep_e-puck/

Posted by: gintherwascond.blogspot.com
